Introduction: Testing Challenges of 10G Ethernet

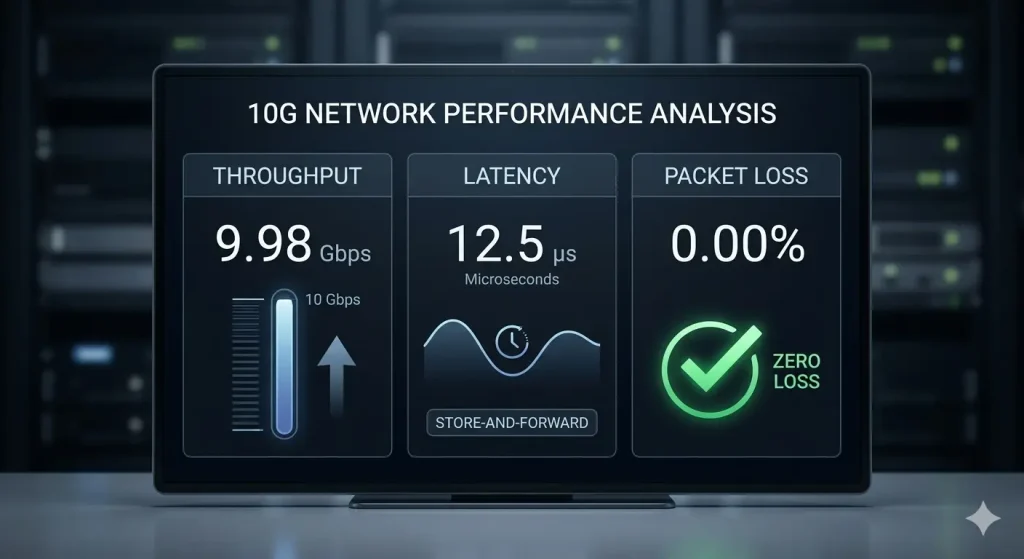

As data centers, enterprise core networks, and carrier-grade telecom networks evolve toward 400G and beyond, 10G Ethernet has long since moved from “cutting-edge technology” to “standard configuration” in network infrastructure. However, more bandwidth does not guarantee better performance. As an engineer with long experience developing network test instruments, I have found that even at wire-speed rates of 10G and above, three metrics (throughput, latency, and packet loss rate) remain the “golden triangle” for evaluating the device under test (DUT) and the system under test (SUT).

In this article, drawing on practical experience with the TFN T3000A 10G Ethernet Tester, I will delve into the testing principles and analytical logic behind these three core indicators, hoping to provide some practical reference for network operations engineers and network equipment testing personnel.

Throughput: More Than Just “Utilizing Full Bandwidth”

Throughput testing aims to determine the maximum forwarding rate a device under test can sustain without dropping any frames. Following the RFC 2544 methodology, the test is run at each of the standard Ethernet frame sizes (64, 128, 256, 512, 1024, 1280, and 1518 bytes), and a search over the offered load, commonly a binary search, converges on the highest rate with zero packet loss.
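The search itself is easy to sketch. The Python below is a minimal illustration under stated assumptions, not the T3000A's implementation: `send_trial` is a hypothetical callback that runs one trial at a given percentage of line rate and returns the number of lost frames, and `simulated_dut` is a made-up device that starts dropping frames above 73.5% load.

```python
from typing import Callable


def find_throughput(send_trial: Callable[[float], int],
                    resolution: float = 0.1) -> float:
    """Return the highest load (percent of line rate) that shows zero loss.

    send_trial is a hypothetical hook: it offers traffic at the given load
    and returns the number of frames lost during that trial.
    """
    # If the device is lossless at line rate, no search is needed.
    if send_trial(100.0) == 0:
        return 100.0

    low, high = 0.0, 100.0  # low: known lossless, high: known lossy
    while high - low > resolution:
        mid = (low + high) / 2
        if send_trial(mid) == 0:
            low = mid    # no loss: the answer is at or above mid
        else:
            high = mid   # loss seen: the answer is below mid
    return low


def simulated_dut(load_percent: float) -> int:
    """Made-up DUT for illustration: lossless up to 73.5% of line rate."""
    capacity = 73.5
    if load_percent <= capacity:
        return 0
    return int((load_percent - capacity) * 1000) + 1


if __name__ == "__main__":
    print(f"Throughput: {find_throughput(simulated_dut):.1f}% of line rate")
```

A real run repeats this search once per frame size, and the `resolution` parameter trades test time against the precision of the reported rate.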

In a 10G Ethernet environment, simply focusing on “whether wire speed is achieved” is insufficient. When testing with the T3000A, we typically pay close attention to performance across different frame sizes: the smaller the frame, the more frames per second the device must process at a given bit rate, and the greater the pressure on its forwarding engine. For example, wire-speed forwarding of 64-byte frames poses a significant challenge to a device’s CPU or NPU (network processing unit). If a device’s throughput falls short, it often indicates a bottleneck in its switching architecture or insufficient table capacity.
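To see why small frames are so demanding, it helps to work out the frame rate at wire speed. The sketch below assumes the usual Ethernet per-frame overhead of 20 bytes on the wire (7-byte preamble, 1-byte start-of-frame delimiter, 12-byte minimum inter-frame gap) and frame sizes that include the FCS, as RFC 2544 frame sizes do. The function name is ours, chosen for illustration.

```python
LINE_RATE_BPS = 10_000_000_000  # 10G Ethernet
OVERHEAD_BYTES = 20             # 7 preamble + 1 SFD + 12 minimum inter-frame gap


def max_frames_per_second(frame_size: int,
                          line_rate_bps: int = LINE_RATE_BPS) -> float:
    """Theoretical maximum frame rate for a given frame size (FCS included)."""
    bits_on_wire = (frame_size + OVERHEAD_BYTES) * 8
    return line_rate_bps / bits_on_wire


if __name__ == "__main__":
    for size in (64, 128, 256, 512, 1024, 1280, 1518):
        print(f"{size:>5} bytes: {max_frames_per_second(size):>13,.0f} frames/s")
```

At 64 bytes this works out to roughly 14.88 million frames per second, against about 0.81 million at 1518 bytes, so the per-packet workload differs by a factor of about 18 at the same bit rate.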

In practical engineering, we also introduce Back-to-Back (Burst) testing to evaluate the device’s buffering capability. By using the T3000A to send bursts of frames at the minimum inter-frame gap (that is, at full wire speed), we find the longest burst the device can forward without loss and observe how it behaves under instantaneous high load. This is crucial for analyzing network performance in scenarios like video streaming or data center burst traffic.
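A back-to-back test can be sketched as a search over burst length rather than rate. The example below is an illustrative assumption, not the tester's actual procedure: `send_burst` is a hypothetical hook that sends a burst of the given length at wire speed and returns how many frames came back, and `simulated_dut` is a made-up device that can absorb at most 20,000 consecutive frames.

```python
from typing import Callable


def longest_lossless_burst(send_burst: Callable[[int], int],
                           max_frames: int) -> int:
    """Return the longest burst (in frames) forwarded with no loss.

    send_burst is a hypothetical hook: it sends the given number of frames
    back to back and returns the number of frames the DUT forwarded.
    """
    low, high = 0, max_frames  # low is always a burst length known to be lossless
    while low < high:
        mid = (low + high + 1) // 2
        if send_burst(mid) == mid:  # every frame was forwarded
            low = mid
        else:
            high = mid - 1
    return low


def simulated_dut(burst_frames: int) -> int:
    """Made-up DUT for illustration: absorbs at most 20,000 back-to-back frames."""
    absorbable = 20_000
    return min(burst_frames, absorbable)


if __name__ == "__main__":
    print(longest_lossless_burst(simulated_dut, max_frames=1_000_000))
```

The resulting burst length, multiplied by the frame size, gives a rough feel for how much buffering the device offers at that frame size.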

Latency: A Microsecond-Level Contest

Latency is a key metric for network quality, especially critical in highly real-time applications such as financial trading, VoIP, and cloud gaming. For 10G Ethernet, latency is typically measured in microseconds (μs) or even nanoseconds (ns).

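Depending on the type of device, latency is defined in one of two ways. For store-and-forward devices, it is the interval from the last bit of the frame entering the input port to the first bit leaving the output port; for bit-forwarding (cut-through) devices, it is the interval from the first bit entering to the first bit leaving. When performing RFC 2544 latency tests, the T3000A timestamps packets and precisely measures the time difference between transmission and reception. A detail often overlooked is that the latency test must be run at or below the throughput rate already determined for that frame size; latency measured under congested conditions is meaningless.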
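The two definitions differ by exactly one frame serialization time, which the following sketch makes explicit. It assumes, purely for illustration, that both timestamps refer to the first bit of the frame and that only the frame bytes (not the preamble) are counted; how a given tester actually places its timestamps is not covered here, and the function names are ours.

```python
LINE_RATE_BPS = 10_000_000_000  # 10G Ethernet


def serialization_time_ns(frame_size_bytes: int) -> float:
    """Time needed to clock one frame onto a 10G link, in nanoseconds."""
    return frame_size_bytes * 8 * 1e9 / LINE_RATE_BPS


def latency_ns(tx_first_bit_ns: float, rx_first_bit_ns: float,
               frame_size_bytes: int, store_and_forward: bool) -> float:
    """Latency from first-bit timestamps (an assumption of this sketch).

    Bit-forwarding devices: first bit in to first bit out.
    Store-and-forward devices: last bit in to first bit out, so the
    frame's serialization time is subtracted.
    """
    first_in_first_out = rx_first_bit_ns - tx_first_bit_ns
    if store_and_forward:
        return first_in_first_out - serialization_time_ns(frame_size_bytes)
    return first_in_first_out


if __name__ == "__main__":
    # Illustrative numbers only: a 1518-byte frame seen 5000 ns after transmission.
    print(latency_ns(0.0, 5000.0, 1518, store_and_forward=False))
    print(latency_ns(0.0, 5000.0, 1518, store_and_forward=True))
```

At 10G a 1518-byte frame takes about 1.2 μs to serialize, so confusing the two definitions can shift a reported latency by more than a microsecond.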

Furthermore, jitter (delay variation) is derived from latency: it describes how much latency varies from one frame to the next. The ITU-T Y.1564 testing standard allows us to verify the latency characteristics of multiple services simultaneously within a single test case. For instance, using the multi-stream functionality of the T3000A, we can simulate the concurrent transmission of voice, video, and data streams and observe whether high-priority services (like voice) maintain low latency during congestion.
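As a rough illustration of how jitter falls out of latency samples, the sketch below computes two common summaries from a list of per-frame latencies: the mean absolute difference between consecutive frames and the peak-to-peak spread. The function name and the sample values are invented for the example, and this is not the exact delay-variation formula of any particular standard.

```python
from typing import Dict, Sequence


def delay_variation(latencies_us: Sequence[float]) -> Dict[str, float]:
    """Summarize jitter from per-frame latency samples (microseconds)."""
    if len(latencies_us) < 2:
        raise ValueError("need at least two latency samples")
    # Difference in latency between each frame and the one before it.
    diffs = [abs(b - a) for a, b in zip(latencies_us, latencies_us[1:])]
    return {
        "mean_consecutive_us": sum(diffs) / len(diffs),
        "peak_to_peak_us": max(latencies_us) - min(latencies_us),
    }


if __name__ == "__main__":
    samples = [10.0, 12.0, 11.0, 15.0]  # invented sample values
    print(delay_variation(samples))
```

Running the same calculation per stream shows whether a high-priority service keeps a tight latency spread while lower-priority streams absorb the congestion.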

Packet Loss Analysis: More Than Just Statistics

The packet loss rate directly reflects network stability. The causes of packet loss are diverse: physical layer issues (e.g., insufficient optical module reception sensitivity, link CRC errors), congestion loss (queue overflow), or policy-based loss (ACL/QoS rate limiting).

When testing 10G Ethernet, we recommend that engineers focus not only on the packet loss percentage but also combine it with Bit Error Rate Testing (BERT) to localize the cause precisely. Taking the T3000A as an example, upon detecting packet loss, we first check whether the received optical power of the module is within the threshold range. Second, by injecting different types of errors (such as FCS errors and IP checksum errors), we can verify how the device under test handles abnormal frames: whether it discards them directly or forwards them erroneously.
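The troubleshooting order described above can be written down as a small decision routine. This is a simplified sketch: the loss percentage is the usual (sent minus received) over sent, while the inputs, the function names, and the order of checks after the optical power test are our own illustrative assumptions rather than a T3000A feature.

```python
def frame_loss_percent(sent: int, received: int) -> float:
    """Frame loss as a percentage of frames sent."""
    if sent <= 0:
        raise ValueError("sent must be positive")
    return (sent - received) * 100 / sent


def triage(sent: int, received: int, rx_power_dbm: float,
           rx_sensitivity_dbm: float, fcs_errors: int) -> str:
    """Suggest where to look first when loss is observed (illustrative only)."""
    if received >= sent:
        return "no loss"
    # Step 1: is the received optical power below the module's sensitivity?
    if rx_power_dbm < rx_sensitivity_dbm:
        return "physical layer: received optical power too low"
    # Step 2: are frames arriving damaged?
    if fcs_errors > 0:
        return "physical layer: bit errors on the link"
    # Otherwise the link looks clean, so suspect queues or policy.
    return "congestion or policy: check queues, ACLs, and QoS rate limits"


if __name__ == "__main__":
    print(frame_loss_percent(1000, 990))
    print(triage(1000, 990, rx_power_dbm=-16.0,
                 rx_sensitivity_dbm=-14.4, fcs_errors=0))
```

The sensitivity value used in the example call is a placeholder; the real threshold comes from the optical module's datasheet.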

Utilizing the Receive Filter Settings on the T3000A, we can accurately count packet loss within specific VLANs or IP flows. This is particularly effective for troubleshooting packet loss issues in MPLS VPNs or multi-tenant data center environments.
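Conceptually, per-flow loss counting is a matter of keeping separate sent and received counters for each flow key. The sketch below uses the VLAN ID as the key and represents each frame simply by its VLAN ID; it illustrates the bookkeeping only and says nothing about how the tester's filters are configured.

```python
from collections import Counter
from typing import Dict, Iterable


def loss_by_vlan(sent_vlans: Iterable[int],
                 received_vlans: Iterable[int]) -> Dict[int, int]:
    """Return the number of lost frames per VLAN ID.

    Each input yields one VLAN ID per frame (a simplification for this sketch).
    """
    sent = Counter(sent_vlans)
    received = Counter(received_vlans)
    # A Counter returns 0 for VLANs that were never received.
    return {vlan: count - received[vlan] for vlan, count in sent.items()}


if __name__ == "__main__":
    sent = [100] * 5 + [200] * 5
    received = [100] * 5 + [200] * 3
    print(loss_by_vlan(sent, received))
```

The same pattern extends to other keys, such as a source and destination IP pair, by changing what each frame is reduced to before counting.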

Conclusion: Evolution of Testing Tools and Testing Mindset

From the traditional RFC 2544 to the modern Y.1564, the methodology of network performance testing is constantly evolving. As test engineers, our tools must keep pace. The TFN T3000A 10G Ethernet Tester integrates BERT, RFC 2544, Y.1564, and multi-service generation capabilities, enabling comprehensive evaluation from the physical layer to the application layer on a single platform.

For anyone planning or operating a 10G Ethernet network, our advice is: don’t simply trust the “wire-speed forwarding” claims. Let rigorous test data speak for itself. Only by deeply understanding the intrinsic relationships between throughput, latency, and packet loss can we build truly reliable and high-performance 10G networks.

If you need a 10G Ethernet tester, the TFN T3000A is a great choice. It offers professional-grade testing capabilities and is lightweight, easy to operate, and equipped with a variety of interfaces. If you are interested in this product, you are welcome to contact the TFN Support Team:

Email: info@tfngj.com

WhatsApp: +86-18765219251

Or you can leave a message here.

FAQ

While 10G wire speed is easier to achieve with large packets, 64-byte small frames place the maximum stress on a device’s forwarding engine. Because smaller frames require the CPU or NPU to process a higher number of packets per second (pps) to hit the 10G threshold, this test reveals whether the device’s switching architecture or table capacity has a performance bottleneck.

Throughput testing (per RFC 2544) identifies the maximum rate at which a device can forward data with zero packet loss. In contrast, Back-to-Back (Burst) testing measures the device’s buffering capability. It sends a burst of traffic at full wire speed to see how many frames the device can handle before its internal buffers overflow, which is essential for evaluating performance in high-burst environments like data centers.

The TFN T3000A precisely measures latency by timestamping packets at the point of transmission and reception. It can distinguish between store-and-forward and bit-forwarding latency, typically measuring in microseconds (μs). To ensure the results are meaningful, these measurements should be taken while the network is running within its confirmed throughput limits to avoid “congestion-induced” latency.

Packet loss isn’t always caused by a faulty device. It often stems from three areas:

Physical Layer: Low optical power or Bit Error Rate (BER) issues.

Congestion: Queue overflows when traffic exceeds buffer capacity.

Policy-based Loss: Incorrectly configured ACLs or QoS rate-limiting.

Using tools like the T3000A’s BERT (Bit Error Rate Testing) helps engineers determine if the loss is due to physical link degradation or logical configuration errors.

While RFC 2544 is the industry standard for benchmarking single-stream performance, Y.1564 is designed for modern multi-service networks. It allows you to simulate and validate multiple traffic streams simultaneously (e.g., VoIP, video, and data). This ensures that high-priority services maintain low latency and zero packet loss even when the link is shared with lower-priority background data.